GPU 1 detected dead: what does it mean?


black_eric

About life and computers


14th-Feb-2021 06:50 pm

Bitcoin is setting new records.

I regret that a year ago I was too cautious to risk 200 bucks and buy one Ether :(.

Today I wondered whether I should do some mining. A lot has changed in 2 years:

0. exmo.me, after a little over a year of inactivity, wrote off everything I had on 09.01.2020. It was about 50 rubles in total, but it still stings.

1. Dwarfpool is dead.

2. Claymore-Dual-Miner, which I used to use, is dead too.

3. I downloaded teamredminer-v0.8.1-win. It refused to work properly on my work RX550 4GB:

[2021-02-14 18:47:51] Pool eu1.ethermine.org received new job. (job_id: 0xa54952c872d. diff 0.931 / 4000 MH)

[2021-02-14 18:47:53] GPU 0: detected DEAD (01:00.0), no restart script configured, will continue mining.

[2021-02-14 18:47:53] Please use command line argument --watchdog_script to handle dead GPUs.

[2021-02-14 18:47:53] ----------------------------------------------------------------------------------------------------

[2021-02-14 18:47:53] Mining ethash with 1 GPU workers

[2021-02-14 18:47:53] GPU PCIe CUs CoreMHz SocMHz MemMHz TEdge TJct TMem FanPct FanRpm VDDC ETH Cfg

[2021-02-14 18:47:53] 0 01:00.0 8 1183 0 1750 40C 40C 40C 0.00% 0 956 mV A4294967264 (*)

[2021-02-14 18:47:53] (*) Gpu is still tuning.

[2021-02-14 18:47:53]

[2021-02-14 18:47:53] Stats Uptime: 0 days, 00:00:31

[2021-02-14 18:47:53] ----------------------------------------- GPU Status -----------------------------------------

[2021-02-14 18:47:53] GPU 0 [40C, fan 0%] ethash: 0.000 h/s, avg 0.000 h/s, pool 0.000 h/s a:0 r:0 hw:0

[2021-02-14 18:47:53] Total ethash: 0.000 h/s, avg 0.000 h/s, pool 0.000 h/s a:0 r:0 hw:0

[2021-02-14 18:47:53] ----------------------------------------- Pool Status ----------------------------------------

[2021-02-14 18:47:53] eu1.ethermine.org ethash: 0.000 h/s, avg 0.000 h/s, pool 0.000 h/s a:0 r:0

[2021-02-14 18:47:53] ----------------------------------------------------------------------------------------------------

[2021-02-14 18:47:55] Pool eu1.ethermine.org received new job. (job_id: 0xe8b0ca4b237. diff 0.931 / 4000 MH)

4. It did not recognize the Vega 11 integrated into the Ryzen at all.

On forums people recommend something called HiveOS, but I have no hardware to spare for it.

Video card prices are astronomical, and 4GB is no longer enough for mining.

So mining casually on the side, the way you could 2-3 years ago, is no longer an option.

- Tags: bitcoin, computers


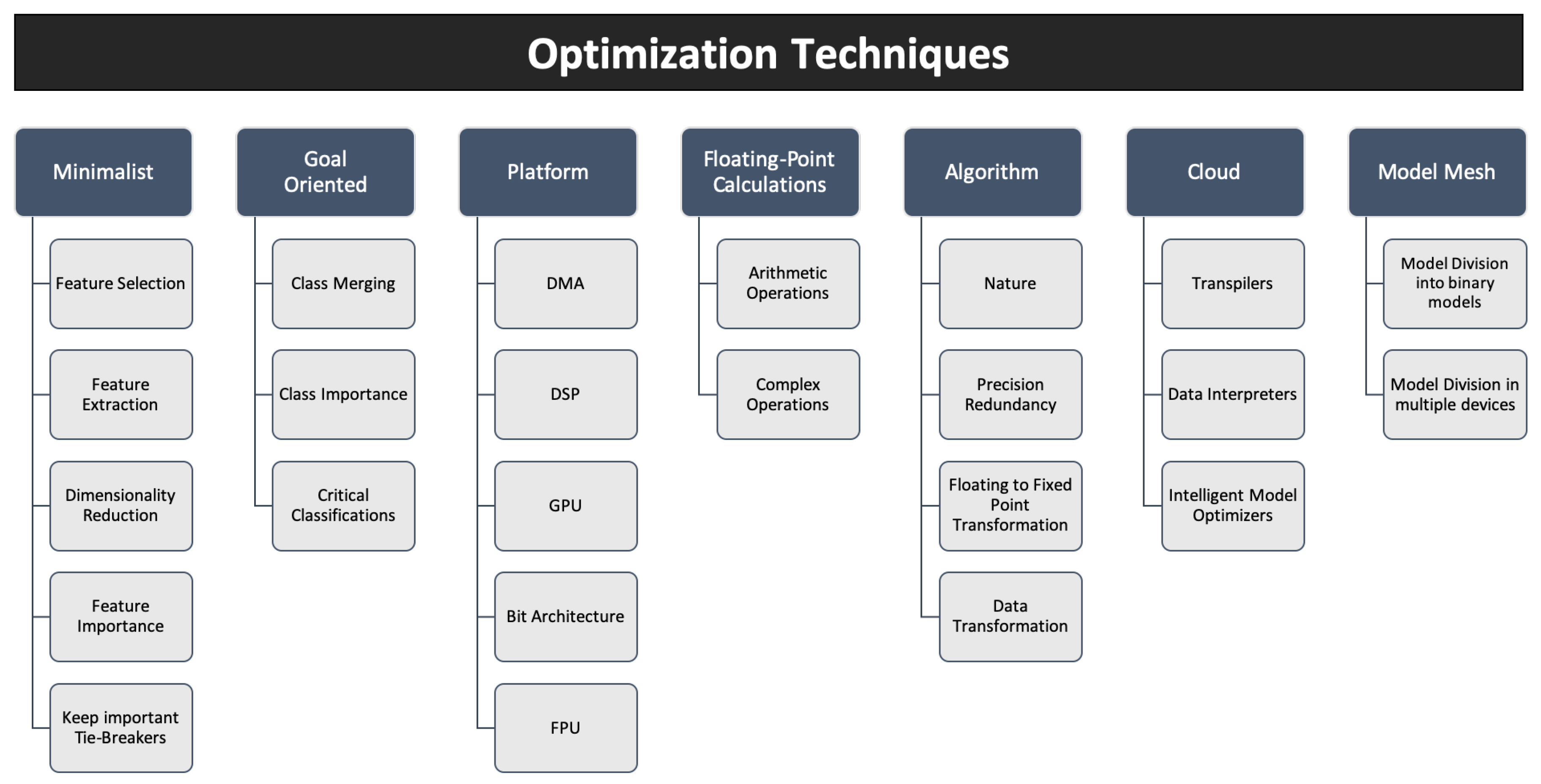

Car detection on a mobile device in 2023: the problems we ran into and how we solved them

Detecting objects in video or photos is a common task with many existing solutions. It becomes somewhat harder when it has to run in real time on a modest mobile device. In this article we share our experience building an application for real-time car detection on a mobile device, written in C++ with TensorFlow-Lite and Qt.

TensorFlow-Lite

TensorFlow-Lite is a library created by Google for deploying models on mobile devices, microcontrollers, and other devices. The library supports several programming languages: C++, Java, Swift, Objective-C, Python.

With TensorFlow-Lite we can use either ready-made models, for example from TensorFlow-Hub, or our own models converted to the TensorFlow-Lite format. In the second case the model may need to be optimized.

Models fall into two types:

- Models with metadata. A model with metadata can be used with the TensorFlow-Lite Support library, which contains ready solutions for many tasks, object detection among them. The library performs the necessary operations on the input image itself and returns the answer as a dedicated class.

- Models without metadata. For a model without metadata we have to preprocess the image ourselves and read the answer through a pointer.

How TensorFlow-Lite optimizes models

Optimization is an important step for running a model successfully on a mobile device, and elsewhere too. It lets us reduce:

- The size of the model itself, which makes the model faster to deploy;

- RAM usage;

- Inference time and, as a consequence, power consumption.

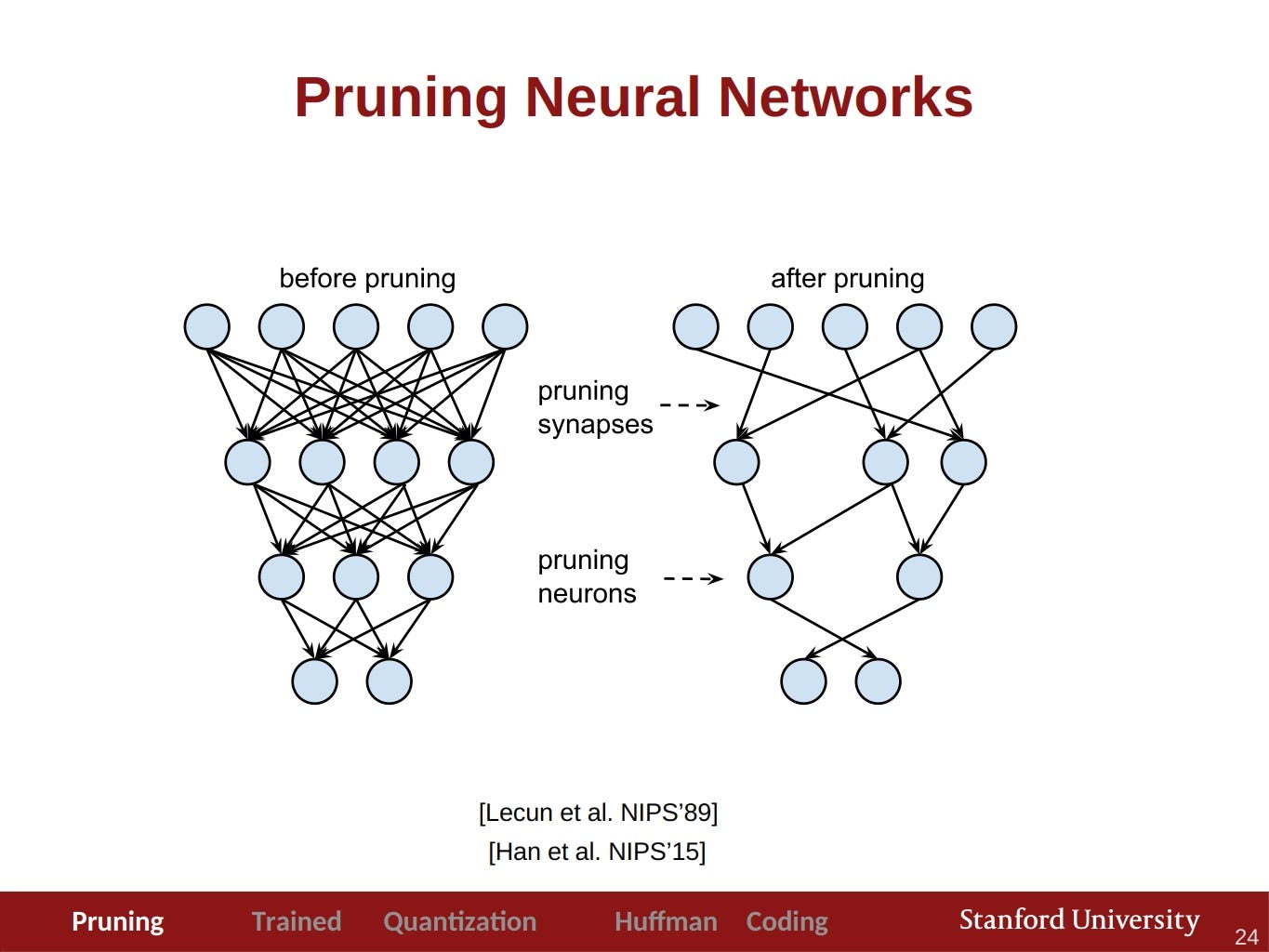

There are many optimization methods, and each is worth studying. With an already trained model only some of the techniques are available, for example:

- Pruning: removing redundant parts of the network to speed up inference, ideally with little or no loss of accuracy. You can watch a video about it here and read about it here.

- Weight clustering: a method of reducing the storage size of a model by replacing many unique parameter values with a smaller number of unique values.

- Quantization: reducing the precision of the numbers used to represent model weights (usually stored as float32) by converting them to an integer type. The price is some loss of accuracy. More detail is available in the article "Master the Art of Quantization: A Practical Guide. Exploring and Implementing Quantization Methods with TensorFlow and PyTorch".
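To make the quantization idea concrete, here is a minimal sketch of affine (scale plus zero point) quantization of a single float32 value to int8 and back. The function names and the `scale` and `zeroPoint` parameters are illustrative assumptions; this is not how TensorFlow-Lite implements it internally, only the arithmetic behind the description above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstdint>

// Maps a float32 value to int8 using q = round(value / scale) + zeroPoint,
// clamped to the int8 range. `scale` and `zeroPoint` are illustrative
// parameters, not values taken from any particular model.
std::int8_t quantize(float value, float scale, int zeroPoint) {
    assert(scale > 0.0f);
    const long q = std::lround(value / scale) + zeroPoint;
    return static_cast<std::int8_t>(std::max<long>(-128, std::min<long>(127, q)));
}

// Approximate reconstruction of the float32 value from its int8 code.
float dequantize(std::int8_t q, float scale, int zeroPoint) {
    return scale * static_cast<float>(static_cast<int>(q) - zeroPoint);
}
```

The round trip is exact only for values that are multiples of `scale`; everything else is rounded to the nearest step, and values outside the representable range are clamped. That rounding and clamping is the source of the accuracy loss mentioned above, in exchange for storing one byte per weight instead of four.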

We also recommend a useful article on model optimization, which explains these methods as well. TensorFlow provides its own toolkit for model optimization, and it already includes these methods. In this project we work with a model that is already optimized for mobile devices.

Application development

As mentioned earlier, we are developing the application in Qt, using Qt Quick and TensorFlow-Lite.

Building TensorFlow-Lite is covered in this article and in the official documentation, but our setup differs in a few ways. We do not use qmake or opencv as that article does: instead of qmake we use cmake, and instead of opencv we use the tools that Qt provides. We also build the gpu delegates differently. They can be built with this command:

bazelisk build -c opt --config android_arm64 tensorflow/lite/delegates/gpu:libtensorflowlite_gpu_delegate.so

Once the libraries are compiled, we need to link them.

First we add the header files. In our case TensorFlow was added as a git submodule, so the headers can be included with include_directories(...).

    include_directories(
        headers/
        third-party/tensorflow
        third-party/flatbuffers/include
    )

Now we need to link the libraries themselves. First we declare them with add_library.

    add_library(tensorflowlite SHARED IMPORTED)
    add_library(tensorflowlite_gpu_delegate SHARED IMPORTED)

After that we need to specify the path to each library through set_property.

    # Setup tensorflowlite property
    set_property(TARGET tensorflowlite PROPERTY IMPORTED_LOCATION $/lib/tensorflow-lite/arm64/libtensorflowlite.so)
    # Setup tensorflowlite delegate property
    set_property(TARGET tensorflowlite_gpu_delegate PROPERTY IMPORTED_LOCATION $/lib/tensorflow-lite/arm64/libtensorflowlite_gpu_delegate.so)

Then link them with target_link_libraries. The libraries also have to be copied to $/android-build/libs/arm64-v8a/. This folder holds the libraries that are later added to the apk.

    file(GLOB libraries "$/lib/tensorflow-lite/arm64/*")
    file(COPY $ DESTINATION $/android-build/libs/arm64-v8a)

Model selection

One place to look for a model is TensorFlow Hub, which already hosts models prepared for mobile devices. We settled on EfficientDet-Lite0. This model comes in several versions: regular, int8-quantized, and with metadata. We used both the regular and the quantized version, and compared the two.

Model\Time inference ms.

Our observations showed that the quantized model runs more steadily, i.e. without spikes in inference time; with the regular model the spikes were more pronounced. We also cannot rule out that the problem lies in the test device (testing was done on a Realme 8i).

Announcement from TensorFlow-Hub

At the time of writing we used TensorFlow Hub to search for models, but from November 15 the models will be hosted on Kaggle Models. More details are in their announcement.

TensorFlow-Lite in code

Let us look at how to use the library. First we declare the necessary variables.

    std::unique_ptr<tflite::Interpreter> mInterpreter;
    std::unique_ptr<tflite::FlatBufferModel> mModel;
    tflite::ops::builtin::BuiltinOpResolver mResolver;
    TfLiteDelegate *mDelegate;
    uchar *mInput;

Next we create the model itself.

    // Init model
    mModel = tflite::FlatBufferModel::BuildFromFile(pathToModel.c_str());
    // Build the interpreter
    tflite::InterpreterBuilder builder(*mModel, mResolver);
    // If set to the value -1, the number of threads used
    // will be implementation-defined and platform-dependent.
    builder.SetNumThreads(-1);
    auto status = builder(&mInterpreter);
    // The logged text was lost in the source; printing the status is an assumption.
    qDebug() << status;
    status = mInterpreter->AllocateTensors();
    qDebug() << status;
    if (status == kTfLiteOk)
        mInput = mInterpreter->typed_input_tensor<uchar>(0);

Inference can be run as follows.

    /**
     * Performs the forward pass of the TFModel using the given QImage.
     * @param image The input image to be processed by the model.
     * @return A std::map containing the model's output.
     */
    std::map<int, float> TFModel::forward(const QImage &image) noexcept
    {
        // The map's key and value types and the warning texts were lost in
        // the source; the ones used here are assumptions.
        if (mInput == nullptr) {
            qWarning() << "Input tensor is not initialized";
            return {};
        }
        const auto transformedImage = transform(image);
        const auto *inputImage = transformedImage.bits();
        if (inputImage == nullptr) {
            qWarning() << "Transformed image has no data";
            return {};
        }
        std::memcpy(mInput, inputImage, constants::model::size);
        if (const auto status = mInterpreter->Invoke(); status == kTfLiteOk)
            return processOutput();
        qWarning() << "Inference failed";
        return {};
    }

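This fragment uses the transform method. It scales the image down to the size we need and changes its format.

    QImage TFModel::transform(const QImage &image) const noexcept
    {
        // The initializer of inputImage was lost in the source. Scaling to the
        // model's input size is an assumption based on the description above;
        // constants::model::width and constants::model::height are hypothetical names.
        QImage inputImage = image.scaled(constants::model::width, constants::model::height);
        // For this model imgFormat is `QImage::Format_RGB888`.
        // It is important to set the correct image format, otherwise our
        // predictions will be wrong.
        inputImage.convertTo(constants::model::imgFormat);
        return inputImage;
    }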

The method below returns the model's predictions.

    /**
     * Retrieves the predictions from the model output.
     * @return A map of predicted classes and their max confidence scores.
     * If no predictions were made, an empty map is returned.
     * If several objects of the same class are detected in the image,
     * only the max score among them is returned.
     */
    std::map<int, float> TFModel::processOutput() const noexcept
    {
        // Model output:
        // detection_boxes: Bounding box for each detection.
        // detection_classes: Object class for each detection.
        // detection_scores: Confidence scores for each detection.
        // num_detections: Total number of detections.
        // Get the total number of detected objects
        const int countDetected{static_cast<int>(std::floor(*getOutput(3)))};
        // Get the detected classes and scores. The initializers and template
        // arguments were stripped in the source; they are reconstructed here
        // from the output order listed above (int keys and float scores assumed).
        const auto detectedClasses{getOutput(1)};
        const auto detectedScores{getOutput(2)};
        // Check if the detectedClasses and detectedScores are valid
        bool itUsable{detectedClasses != nullptr};
        itUsable &= detectedScores != nullptr;
        // Initialize the map to store the predictions
        std::map<int, float> predictions;
        // Iterate over the detected objects
        // In this model, countDetected cannot be more than 25.
        for (int i = 0; itUsable && (i < countDetected); i++) {
            const auto classId = static_cast<int>(std::floor(detectedClasses[i]));
            const auto score = static_cast<float>(detectedScores[i]);
            const bool itDetected{score >= constants::model::threshold};
            // If the object is detected with a high enough confidence score
            if (itDetected) {
                // Add the class to the predictions map if it doesn't exist
                predictions.try_emplace(classId, 0);
                // Update the max score for the class if necessary
                predictions[classId] = std::max(predictions[classId], score);
            }
        }
        return predictions;
    }

This fragment uses the getOutput method. It is equivalent to mInterpreter->typed_output_tensor<float>(numOutput) and was introduced to make the code more readable.
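The aggregation step of processOutput can be tried without TensorFlow-Lite or Qt. The sketch below is a stand-alone version that takes the class and score arrays as plain vectors; the function name `maxScorePerClass` and its signature are hypothetical and only illustrate the "keep the best score per class above a threshold" logic described above.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <map>
#include <vector>

// Keeps, for every class whose detection passes the threshold, the highest
// confidence score seen. Inputs mimic the detection_classes and
// detection_scores tensors; the function itself is a hypothetical helper.
std::map<int, float> maxScorePerClass(const std::vector<float> &classes,
                                      const std::vector<float> &scores,
                                      float threshold) {
    assert(classes.size() == scores.size());
    std::map<int, float> predictions;
    for (std::size_t i = 0; i < classes.size(); ++i) {
        if (scores[i] < threshold)
            continue;  // detection is not confident enough
        const int classId = static_cast<int>(std::floor(classes[i]));
        const auto it = predictions.find(classId);
        if (it == predictions.end())
            predictions.emplace(classId, scores[i]);
        else
            it->second = std::max(it->second, scores[i]);
    }
    return predictions;
}
```

For example, classes `{2, 2, 7, 5}` with scores `{0.6, 0.9, 0.3, 0.8}` and a threshold of 0.5 give two entries: class 2 with 0.9 and class 5 with 0.8. Class 7 is dropped because its only detection is below the threshold.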

С какими проблемами мы столкнулись

One of the problems we ran into was the camera. It worked without any issues on desktop systems, but not on mobile ones. In many releases it simply did not work and the application crashed. Here is an example of the error on version 6.5.2.

    W ImageReader_JNI: Unable to acquire a buffer item, very likely client tried to acquire more than maxImages buffers
    W ImageReader_JNI: Unable to acquire a buffer item, very likely client tried to acquire more than maxImages buffers
    E AndroidRuntime: FATAL EXCEPTION: CameraBackground
    E AndroidRuntime: Process: org.qtproject.example.camera, PID: 16762
    E AndroidRuntime: java.lang.IllegalStateException: maxImages (10) has already been acquired, call #close before acquiring more.
    E AndroidRuntime: at android.media.ImageReader.acquireNextImage(ImageReader.java:501)
    E AndroidRuntime: at android.media.ImageReader.acquireLatestImage(ImageReader.java:386)
    E AndroidRuntime: at org.qtproject.qt.android.multimedia.QtCamera2$4.onImageAvailable(QtCamera2.java:157)
    E AndroidRuntime: at android.media.ImageReader$ListenerHandler.handleMessage(ImageReader.java:812)
    E AndroidRuntime: at android.os.Handler.dispatchMessage(Handler.java:108)
    E AndroidRuntime: at android.os.Looper.loop(Looper.java:166)
    E AndroidRuntime: at android.os.HandlerThread.run(HandlerThread.java:65)

Work on this bug is already under way and Qt is aware of it. The bug ticket:

bugreports.qt.io

The bug can be fixed, but that requires rebuilding Qt. Another option is to switch to a different Qt version. We tried 6.2, 6.3, 6.4, and 6.5; of these, the camera worked without any problems only in 6.4.

We also ran into another problem: in portrait orientation the image was stretched. We fixed this by setting the aspect ratio ourselves.

Capturing an image from the camera

Let us look at how to capture an image from the camera and what to watch out for. We capture the image with Qt Quick and QtMultimedia.

    MediaDevices {
        id: mediaDevices
    }

    CaptureSession {
        camera: Camera {
            id: camera
            cameraDevice: mediaDevices.defaultVideoInput
            active: true
            focusMode: Camera.FocusModeInfinity
        }
        videoOutput: preview
    }

    VideoHandler {
        id: handler
        videoSink: preview.videoSink
    }

    VideoOutput {
        id: preview
        property double aspectRation: 1
        anchors.centerIn: parent
        height: parent.height
        width: aspectRation * height
        fillMode: VideoOutput.Stretch
        onFrameUpdated: {
            aspectRation = preview.videoSink.videoSize.height / preview.videoSink.videoSize.width
        }
    }

Let us go through this fragment. In it, MediaDevices lets us choose the camera, CaptureSession captures the image, VideoOutput shows a preview of the video stream, and VideoHandler is our own class that takes a frame from the video stream and sends it to the model.

An image can be obtained with mVideoSink->videoFrame().toImage(), where mVideoSink is a QPointer<QVideoSink>. This is what we set in VideoHandler.

A couple of remarks. It is better to move the work with the model to a separate thread so that the picture does not freeze. It is also better to run inference not on every frame update but at intervals. For this we created a timer with a 1 second interval, so inference ran once per second.
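The "inference at intervals" idea can also be expressed without a timer, by deciding per frame whether enough time has passed. The sketch below is a related alternative, not the timer-based approach the article describes: `InferenceThrottle` is a hypothetical helper built only on `std::chrono`, and the caller is assumed to pass in the time at which each frame arrived.

```cpp
#include <cassert>
#include <chrono>

// Accepts a frame for inference only if at least `interval` has elapsed
// since the previously accepted frame. Hypothetical helper, not part of Qt
// or TensorFlow-Lite.
class InferenceThrottle {
public:
    using Clock = std::chrono::steady_clock;

    explicit InferenceThrottle(std::chrono::milliseconds interval)
        : mInterval(interval) {
        assert(interval.count() > 0);
    }

    // Returns true when the frame arriving at `now` should be sent to the model.
    bool shouldRun(Clock::time_point now) {
        if (mHasRun && now - mLast < mInterval)
            return false;  // too soon after the last accepted frame
        mLast = now;
        mHasRun = true;
        return true;
    }

private:
    std::chrono::milliseconds mInterval;
    Clock::time_point mLast{};
    bool mHasRun = false;
};
```

With a 1000 ms interval, a frame handler would call `throttle.shouldRun(InferenceThrottle::Clock::now())` on every frame update and skip the model whenever it returns false. The first frame is always accepted.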

Delegates

Delegates speed up model inference by using the GPU and DSP. TensorFlow-Lite has several types of delegates.

- GPU delegates. Supported on android and ios.

- NNAPI and Hexagon delegates. Supported on android only.

- Core ML delegates. Supported on ios only.

More details are available in the documentation.

TensorFlow Lite Delegates

In our project we used GPU delegates, but we ran into a small problem: the model we chose contained custom operators. As a result only part of the operations ran on the GPU, and this brought no significant improvement. The same can be seen in the benchmark for our model.


NVIDIA RTX is the most advanced platform for ray tracing and AI technologies, which transform and improve gameplay and content creation applications. Thanks to RTX, more than 500 popular games and applications have already received a performance boost, improved graphics, and cutting-edge AI-based features such as NVIDIA DLSS 3.5 with Ray Reconstruction. With RTX technology

Check out the top games with RTX.

Ray tracing

Hyperrealism. Extreme speed.

The NVIDIA Ada Lovelace architecture unlocks the full potential of ray tracing, which simulates how light behaves in the real world. Immerse yourself in incredibly detailed virtual worlds with the performance of GeForce RTX 40 cards and third-generation RT cores.

NVIDIA DLSS 3

Maximum FPS and quality with AI

DLSS is a true revolution in AI-based graphics that multiplies performance. Relying on the new fourth-generation Tensor Cores and the optical flow accelerator in GeForce RTX 40 GPUs, DLSS 3 uses AI to create additional frames and improve image quality.

*Captured with a GeForce RTX 4090 at 3840 x 2160 with maximum game settings and RT Overdrive mode.

NVIDIA Studio

Your creativity with AI.

Take your creative projects to the next level with NVIDIA Studio. Equipped with new hardware capabilities, RTX 40 series GPUs deliver unmatched performance in 3D rendering, video editing, and graphic design. Experience RTX acceleration in popular creative applications, try the world-class NVIDIA Studio drivers built for maximum stability, and use the suite of exclusive tools that harness RTX for AI-based creative workflows.

Bumblebee

Bumblebee is an effort to make NVIDIA Optimus enabled laptops work in GNU/Linux systems. Such feature involves two graphics cards with two different power consumption profiles plugged in a layered way sharing a single framebuffer.

Note: Bumblebee has significant performance issues[1][2]. See NVIDIA Optimus for alternative solutions.

Bumblebee: Optimus for Linux

Optimus Technology is a hybrid graphics implementation without a hardware multiplexer. The integrated GPU manages the display while the dedicated GPU manages the most demanding rendering and ships the work to the integrated GPU to be displayed. When the laptop is running on battery supply, the dedicated GPU is turned off to save power and prolong the battery life. It has also been tested successfully with desktop machines with Intel integrated graphics and an nVidia dedicated graphics card.

Bumblebee is a software implementation comprising two parts:

- Render programs off-screen on the dedicated video card and display it on the screen using the integrated video card. This bridge is provided by VirtualGL or primus (read further) and connects to a X server started for the discrete video card.

- Disable the dedicated video card when it is not in use (see the #Power management section)

It tries to mimic the Optimus technology behavior; using the dedicated GPU for rendering when needed and power it down when not in use. The present releases only support rendering on-demand, automatically starting a program with the discrete video card based on workload is not implemented.

Installation

Before installing Bumblebee, check your BIOS and activate Optimus (older laptops call it «switchable graphics») if possible (the BIOS does not have to provide this option). If neither «Optimus» nor «switchable» is in the BIOS, still make sure both GPUs are enabled and that the integrated graphics (igfx) is the initial display (primary display). The display should be connected to the onboard integrated graphics, not the discrete graphics card. If integrated graphics had previously been disabled and discrete graphics drivers installed, be sure to remove /etc/X11/xorg.conf or the conf file in /etc/X11/xorg.conf.d related to the discrete graphics card.

Install:

- bumblebee — The main package providing the daemon and client programs.

- mesa — An open-source implementation of the OpenGL specification.

- An appropriate version of the NVIDIA driver, see NVIDIA#Installation.

- Optionally install xf86-video-intel — Intel Xorg driver.

For 32-bit application support, enable the multilib repository and install:

- lib32-virtualgl — A render/display bridge for 32 bit applications.

- lib32-nvidia-utils or lib32-nvidia-340xx-utils (AUR) (match the version of the regular NVIDIA driver).

In order to use Bumblebee, it is necessary to add your regular user to the bumblebee group:

# gpasswd -a user bumblebee

Also enable bumblebeed.service . Reboot your system and follow #Usage.

- The bumblebee package will install a kernel module blacklist file that prevents the nvidia-drm module from loading on boot. Remember to uninstall this if you later switch away to other solutions.

- The package does not blacklist the nvidiafb module. You probably do not have it installed, because the default kernels do not ship it. However, with other kernels you must explicitly blacklist it too, otherwise optirun and primusrun will not run. See FS#69018.

Usage

Test

Install mesa-utils and use glxgears to test if Bumblebee works with your Optimus system:

$ optirun glxgears -info

If it fails, try the following commands (from virtualgl ):

$ optirun glxspheres64

If the window with animation shows up, Optimus with Bumblebee is working.

Note: If glxgears failed, but glxspheres64 worked, always replace glxgears with glxspheres64 in all cases.

General usage

$ optirun [options] application [application-parameters]

For example, start Windows applications with Optimus:

$ optirun wine application.exe

For another example, open NVIDIA Settings panel with Optimus:

$ optirun -b none nvidia-settings -c :8

Note: A patched version of nvdock (AUR) is available in the package nvdock-bumblebee (AUR).

For a list of all available options, see optirun(1) .

Configuration

You can configure the behaviour of Bumblebee to fit your needs. Fine tuning such as speed optimization and power management is done in /etc/bumblebee/bumblebee.conf.

Optimizing speed

One disadvantage of the offscreen rendering methods is performance. The following table gives a rough overview for a Lenovo ThinkPad T480 in an eGPU setup with an NVIDIA GTX 1060 6GB, using the unigine-heaven (AUR) benchmark (1920×1080, max settings, 8x AA):

| Command | Display | FPS | Score | Min FPS | Max FPS |

|---|---|---|---|---|---|

| optirun unigine-heaven | internal | 20.7 | 521 | 6.9 | 26.6 |

| primusrun unigine-heaven | internal | 36.9 | 930 | 15.3 | 44.1 |

| unigine-heaven | internal in Nvidia-xrun | 51.3 | 1293 | 8.4 | 95.6 |

| unigine-heaven | external in Nvidia-xrun | 56.1 | 1414 | 8.4 | 111.9 |

Using VirtualGL as bridge

Bumblebee renders frames for your Optimus NVIDIA card in an invisible X Server with VirtualGL and transports them back to your visible X Server. Frames will be compressed before they are transported — this saves bandwidth and can be used for speed-up optimization of bumblebee:

To use another compression method for a single application:

$ optirun -c compress-method application

The compression method affects how the load is split between the GPU and the CPU: compressed methods mostly load the CPU, while uncompressed methods mostly load the GPU.

Here is a performance table measured on an ASUS N550JV laptop with the unigine-heaven (AUR) benchmark:

| Command | FPS | Score | Min FPS | Max FPS |

|---|---|---|---|---|

| optirun unigine-heaven | 25.0 | 630 | 16.4 | 36.1 |

| optirun -c jpeg unigine-heaven | 24.2 | 610 | 9.5 | 36.8 |

| optirun -c rgb unigine-heaven | 25.1 | 632 | 16.6 | 35.5 |

| optirun -c yuv unigine-heaven | 24.9 | 626 | 16.5 | 35.8 |

| optirun -c proxy unigine-heaven | 25.0 | 629 | 16.0 | 36.1 |

| optirun -c xv unigine-heaven | 22.9 | 577 | 15.4 | 32.2 |

Note: Lag spikes occurred when jpeg compression method was used.

To use a standard compression for all applications, set the VGLTransport to compress-method in /etc/bumblebee/bumblebee.conf :

/etc/bumblebee/bumblebee.conf

    [...]
    [optirun]
    VGLTransport=proxy
    [...]

You can also play with the way VirtualGL reads back the pixels from your graphic card. Setting VGL_READBACK environment variable to pbo should increase the performance. Compare the following:

PBO should be faster:

VGL_READBACK=pbo optirun glxgears

The default value is sync:

VGL_READBACK=sync optirun glxgears

Note: CPU frequency scaling directly affects render performance.

Primusrun

Note: Since compositing hurts performance, invoking primus when a compositing WM is active is not recommended. See #Primus issues under compositing window managers.

primusrun (from primus ) is becoming the default choice, because it consumes less power and sometimes provides better performance than optirun / virtualgl . It may be run separately, but it does not accept options as optirun does. Setting primus as the bridge for optirun provides more flexibility.

For 32-bit applications support on 64-bit machines, install lib32-primus (multilib must be enabled).

You can either run it separately:

$ primusrun glxgears

Or as a bridge for optirun. The default configuration sets virtualgl as the bridge. Override that on the command line:

$ optirun -b primus glxgears

Alternatively, set Bridge=primus in /etc/bumblebee/bumblebee.conf and you will not have to specify it on the command line.

Tip: Refer to #Primusrun mouse delay (disable VSYNC) if you want to disable VSYNC . It can also remove mouse input delay lag and slightly increase the performance.

Pvkrun

pvkrun from the package primus_vk is a drop-in replacement for primusrun that makes it possible to run Vulkan-based applications. A quick check can be done with vulkaninfo from vulkan-tools .

$ pvkrun vulkaninfo

Power management


The goal of the power management feature is to turn off the NVIDIA card when it is not used by Bumblebee any more. If bbswitch (for linux ) or bbswitch-dkms (for linux-lts or custom kernels) is installed, it will be detected automatically when the Bumblebee daemon starts. No additional configuration is necessary. However, bbswitch is for Optimus laptops only and will not work on desktop computers. So, Bumblebee power management is not available for desktop computers, and there is no reason to install bbswitch on a desktop. (Nevertheless, the other features of Bumblebee do work on some desktop computers.)

To manually turn the card on or off using bbswitch, write into /proc/acpi/bbswitch:

    # echo OFF > /proc/acpi/bbswitch
    # echo ON > /proc/acpi/bbswitch

Default power state of NVIDIA card using bbswitch

The default behavior of bbswitch is to leave the card power state unchanged. bumblebeed does disable the card when started, so the following is only necessary if you use bbswitch without bumblebeed.

Set load_state and unload_state module options according to your needs (see bbswitch documentation).

/etc/modprobe.d/bbswitch.conf

options bbswitch load_state=0 unload_state=1

To run bbswitch without bumblebeed on system startup, do not forget to add bbswitch to /etc/modules-load.d , as explained in Kernel module#systemd.

Enable NVIDIA card during shutdown

On some laptops, the NVIDIA card may not correctly initialize during boot if the card was powered off when the system was last shut down. Therefore the Bumblebee daemon will power on the GPU when stopping the daemon (e.g. on shutdown) due to the (default) setting TurnCardOffAtExit=false in /etc/bumblebee/bumblebee.conf . Note that this setting does not influence power state while the daemon is running, so if all optirun or primusrun programs have exited, the GPU will still be powered off.

When you stop the daemon manually, you might want to keep the card powered off while still powering it on on shutdown. To achieve the latter, add the following systemd service (if using bbswitch ):

/etc/systemd/system/nvidia-enable.service

    [Unit]
    Description=Enable NVIDIA card
    DefaultDependencies=no

    [Service]
    Type=oneshot
    ExecStart=/bin/sh -c 'echo ON > /proc/acpi/bbswitch'

    [Install]
    WantedBy=shutdown.target

Then enable the nvidia-enable.service unit.

Enable NVIDIA card after waking from suspend

The bumblebee daemon may fail to activate the graphics card after suspending. A possible fix involves setting bbswitch as the default method for power management in /etc/bumblebee/bumblebee.conf :

/etc/bumblebee/bumblebee.conf

    [driver-nvidia]
    PMMethod=bbswitch

    [driver-nouveau]
    PMMethod=bbswitch

Note: This fix seems to work only after rebooting the system. Restarting the bumblebee service is not enough.

If the above fix fails, try the following command:

# echo 1 > /sys/bus/pci/rescan

To rescan the PCI bus automatically after a suspend, create a script as described in Power management#Hooks in /usr/lib/systemd/system-sleep.

Multiple monitors

Outputs wired to the Intel chip

If the port (DisplayPort/HDMI/VGA) is wired to the Intel chip, you can set up multiple monitors with xorg.conf. Set them to use the Intel card, but Bumblebee can still use the NVIDIA card. One example configuration is below for two identical screens with 1080p resolution and using the HDMI out.

/etc/X11/xorg.conf

    Section "Screen"
        Identifier     "Screen0"
        Device         "intelgpu0"
        Monitor        "Monitor0"
        DefaultDepth    24
        Option         "TwinView" "0"
        SubSection "Display"
            Depth          24
            Modes          "1920x1080_60.00"
        EndSubSection
    EndSection

    Section "Screen"
        Identifier     "Screen1"
        Device         "intelgpu1"
        Monitor        "Monitor1"
        DefaultDepth   24
        Option         "TwinView" "0"
        SubSection "Display"
            Depth          24
            Modes          "1920x1080_60.00"
        EndSubSection
    EndSection

    Section "Monitor"
        Identifier     "Monitor0"
        Option         "Enable" "true"
    EndSection

    Section "Monitor"
        Identifier     "Monitor1"
        Option         "Enable" "true"
    EndSection

    Section "Device"
        Identifier     "intelgpu0"
        Driver         "intel"
        Option         "UseEvents" "true"
        Option         "AccelMethod" "UXA"
        BusID          "PCI:0:2:0"
    EndSection

    Section "Device"
        Identifier     "intelgpu1"
        Driver         "intel"
        Option         "UseEvents" "true"
        Option         "AccelMethod" "UXA"
        BusID          "PCI:0:2:0"
    EndSection

    Section "Device"
        Identifier     "nvidiagpu1"
        Driver         "nvidia"
        BusID          "PCI:0:1:0"
    EndSection

You probably need to change the BusID for both the Intel and the NVIDIA card.

$ lspci | grep VGA

00:02.0 VGA compatible controller: Intel Corporation 2nd Generation Core Processor Family Integrated Graphics Controller (rev 09)

The BusID is 0:2:0. Note that lspci outputs hexadecimal values, but Xorg expects decimal values.
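Because lspci prints the address in hexadecimal while Xorg expects decimal, the conversion is easy to get wrong for buses above 9. The following is a minimal sketch of that conversion; `toXorgBusId` is a hypothetical helper, and it assumes the short `bus:device.function` form shown above (no PCI domain prefix).

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Converts an lspci address such as "01:00.0" (hexadecimal
// bus:device.function) into the decimal "PCI:bus:device:function" string
// used for BusID in xorg.conf. Hypothetical helper for illustration.
std::string toXorgBusId(const std::string &lspciAddress) {
    const std::size_t colon = lspciAddress.find(':');
    assert(colon != std::string::npos);
    const std::size_t dot = lspciAddress.find('.', colon);
    assert(dot != std::string::npos);

    // Each field is parsed as base 16 and printed back as base 10.
    const int bus = std::stoi(lspciAddress.substr(0, colon), nullptr, 16);
    const int device = std::stoi(lspciAddress.substr(colon + 1, dot - colon - 1), nullptr, 16);
    const int function = std::stoi(lspciAddress.substr(dot + 1), nullptr, 16);

    return "PCI:" + std::to_string(bus) + ":" + std::to_string(device) + ":" +
           std::to_string(function);
}
```

For the Intel controller above, `toXorgBusId("00:02.0")` yields `PCI:0:2:0`. An address such as `0a:00.0` becomes `PCI:10:0:0`, which is the case where copying the lspci digits verbatim would point Xorg at the wrong bus.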

Output wired to the NVIDIA chip

On some notebooks, the digital Video Output (HDMI or DisplayPort) is hardwired to the NVIDIA chip. If you want to use all the displays on such a system simultaneously, the easiest solution is to use intel-virtual-output, a tool provided in the xf86-video-intel driver set, as of v2.99. It will allow you to extend the existing X session onto other screens, leveraging virtual outputs to work with the discrete graphics card. Usage is as follows:

$ intel-virtual-output [OPTION]... [TARGET_DISPLAY]...

    -d    source display
    -f    keep in foreground (do not detach from console and daemonize)
    -b    start bumblebee
    -a    connect to all local displays (e.g. :1, :2, etc)
    -S    disable use of a singleton and launch a fresh intel-virtual-output process
    -v    all verbose output, implies -f
    -V    specific verbose output, implies -f
    -h    this help

If this command alone does not work, you can try running it with optirun to enable the discrete graphics and allow it to detect the outputs accordingly. This is known to be necessary on Lenovo’s Legion Y720.

$ optirun intel-virtual-output

If no target displays are passed on the command line, intel-virtual-output will attempt to connect to any local display. The detected displays can be managed with any display configuration tool such as xrandr or KDE Display. The tool will also start bumblebee (which may be left as installed by default). See the Bumblebee wiki page for more information.

When run in a terminal, intel-virtual-output will daemonize itself unless the -f switch is used. Games can be run on the external screen by first exporting the display with export DISPLAY=:8 and then running the game with optirun game_bin; however, the cursor and keyboard are not fully captured. Use export DISPLAY=:0 to revert to standard operation.

If intel-virtual-output does not detect displays, or if a no VIRTUAL outputs on ":0" message is obtained, then create:

/etc/X11/xorg.conf.d/20-intel.conf

Section "Device"
    Identifier     "intelgpu0"
    Driver         "intel"
EndSection

which does not exist by default, and edit:

/etc/bumblebee/xorg.conf.nvidia

Section "ServerLayout"
    Identifier     "Layout0"
    Option         "AutoAddDevices" "true"
    Option         "AutoAddGPU" "false"
EndSection

Section "Device"
    Identifier     "DiscreteNvidia"
    Driver         "nvidia"
    VendorName     "NVIDIA Corporation"
    Option         "ProbeAllGpus" "false"
    Option         "NoLogo" "true"
    Option         "UseEDID" "true"
    Option         "AllowEmptyInitialConfiguration"
#   Option         "UseDisplayDevice" "none"
EndSection

Section "Screen"
    Identifier     "Screen0"
    Device         "DiscreteNvidia"
EndSection

See [3] for further configurations to try. If the laptop screen is stretched and the cursor is misplaced while the external monitor shows only the cursor, try killing any running compositing managers.

If you do not want to use intel-virtual-output, another option is to configure Bumblebee to leave the discrete GPU on and directly configure X to use both the screens, as it will be able to detect them.

As a last resort, you can run two X servers. The first uses the Intel driver for the notebook's screen. The second is started through optirun on the NVIDIA card and shows on the external display. Make sure to disable any display/session manager before manually starting your desktop environment with optirun. Then you can log in to the session powered by the integrated graphics.

Disabling screen blanking

You can disable screen blanking when using intel-virtual-output with xset by setting the DISPLAY environment variable appropriately (see DPMS for more info):

$ DISPLAY=:8 xset -dpms s off

Multiple NVIDIA graphics cards or NVIDIA Optimus

If you have multiple NVIDIA graphics cards (e.g. when using an eGPU with a laptop that has another built-in NVIDIA graphics card) or NVIDIA Optimus, you need to make a minor edit to /etc/bumblebee/xorg.conf.nvidia. If this change is not made, the daemon may default to using the internal NVIDIA card.

First, determine the BusID of the external card:

$ lspci | grep -E "VGA|3D"

00:02.0 VGA compatible controller: Intel Corporation HD Graphics 530 (rev 06)
01:00.0 3D controller: NVIDIA Corporation GM107M [GeForce GTX 960M] (rev a2)
0b:00.0 VGA compatible controller: NVIDIA Corporation GP104 [GeForce GTX 1070] (rev a1)

In this case, the BusID is 0b:00.0.

Now edit /etc/bumblebee/xorg.conf.nvidia and add the following lines to Section "Device":

/etc/bumblebee/xorg.conf.nvidia

Section "Device"
    ...
    BusID "PCI:11:00:0"
    Option "AllowExternalGpus" "true"  # If the GPU is external
    ...
EndSection

Note: The hexadecimal 0b became the decimal 11.
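The hexadecimal-to-decimal conversion can be scripted. The following is only a sketch: the function name lspci_to_busid is hypothetical, and it assumes an address in the bus:device.function form printed by lspci, optionally preceded by a four-digit PCI domain that is simply dropped.

```shell
# Convert an lspci address (hexadecimal "bus:device.function") into the
# decimal "PCI:bus:device:function" form that Xorg expects.
# lspci_to_busid is a hypothetical helper name, not part of Bumblebee.
lspci_to_busid() {
    addr=${1#????:}        # drop a leading PCI domain such as "0000:", if present
    bus=${addr%%:*}        # text before the first ":"
    rest=${addr#*:}        # "device.function"
    dev=${rest%%.*}        # text before the "."
    func=${rest#*.}        # text after the "."
    # printf %d accepts C-style hexadecimal constants such as 0x0b
    printf 'PCI:%d:%d:%d\n' "0x$bus" "0x$dev" "0x$func"
}
```

For the external card above, lspci_to_busid 0b:00.0 prints PCI:11:0:0, which Xorg treats the same as PCI:11:00:0.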

Troubleshooting

Note: Please report bugs at Bumblebee-Project’s GitHub tracker as described in its wiki.

[VGL] ERROR: Could not open display :8

There is a known problem with some wine applications that fork and kill the parent process without keeping track of it (for example the free to play online game «Runes of Magic»).

This is a known problem with VirtualGL. As of bumblebee 3.1, so long as you have it installed, you can use Primus as your render bridge:

$ optirun -b primus wine windows program.exe

If this does not work, an alternative workaround for this problem is:

$ optirun bash
$ optirun wine windows program.exe

If you are using the NVIDIA drivers, a fix for this problem is to edit /etc/bumblebee/xorg.conf.nvidia and change Option ConnectedMonitor to CRT-0.

Xlib: extension "GLX" missing on display ":0.0"

If you tried to install the NVIDIA driver from the NVIDIA website, this is not going to work.

Uninstall that driver with the same installer:

# ./NVIDIA-Linux-*.run --uninstall

Then remove the Xorg configuration file generated by the NVIDIA installer:

# rm /etc/X11/xorg.conf

[ERROR]Cannot access secondary GPU: No devices detected

In some instances, running optirun will return:

[ERROR]Cannot access secondary GPU - error: [XORG] (EE) No devices detected. [ERROR]Aborting because fallback start is disabled.

In this case, move the file /etc/X11/xorg.conf.d/20-intel.conf somewhere else and restart the bumblebeed daemon; it should then work. If you do need to change some features for the Intel module, a workaround is to merge /etc/X11/xorg.conf.d/20-intel.conf into /etc/X11/xorg.conf.

It may also be necessary to comment out the driver line in /etc/X11/xorg.conf.d/10-monitor.conf.

If you are using the nouveau driver you could try switching to the nvidia driver.

You might need to define the NVIDIA card somewhere (e.g. file /etc/bumblebee/xorg.conf.nvidia ), using the correct BusID according to lspci output:

Section "Device"
    Identifier "nvidiagpu1"
    Driver "nvidia"
    BusID "PCI:0:1:0"
EndSection

Note that lspci prints the address in hexadecimal, while Xorg expects decimal values. So if the output of lspci is, for example, 0a:00.0, the BusID should be PCI:10:0:0.

NVIDIA(0): Failed to assign any connected display devices to X screen 0

If the console output is:

[ERROR]Cannot access secondary GPU - error: [XORG] (EE) NVIDIA(0): Failed to assign any connected display devices to X screen 0 [ERROR]Aborting because fallback start is disabled.

then, if the following line does not exist in /etc/bumblebee/xorg.conf.nvidia, add it to the "Device" section:

Option "ConnectedMonitor" "DFP"

If it does already exist, you can try changing it to:

Option "ConnectedMonitor" "CRT"

After that, restart the Bumblebee service to apply these changes.

Failed to initialize the NVIDIA GPU at PCI:1:0:0 (GPU fallen off the bus / RmInitAdapter failed!)

Add rcutree.rcu_idle_gp_delay=1 to the kernel parameters of the boot loader configuration (see also the original BBS post for a configuration example).

Failed to initialize the NVIDIA GPU at PCI:1:0:0 (Bumblebee daemon reported: error: [XORG] (EE) NVIDIA(GPU-0))

You might find that the primusrun or optirun command no longer works after resuming from sleep. There are two ways to fix this issue: reboot your system, or execute the following command:

# echo 1 > /sys/bus/pci/rescan

Then test whether primusrun or optirun works.

If the above command did not help, try finding your NVIDIA card’s bus ID:

$ lspci | grep VGA

00:02.0 VGA compatible controller: Intel Corporation Core Processor Integrated Graphics Controller (rev 02)
01:00.0 VGA compatible controller: nVidia Corporation Device 0df4 (rev a1)

In this example, the command shows 01:00.0, so use the following commands with this bus ID:

# echo 1 > /sys/bus/pci/devices/0000:01:00.0/remove
# echo 1 > /sys/bus/pci/rescan
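The two writes above can be wrapped in a small helper that takes the address as printed by lspci. This is a sketch only: the function name reset_pci_device and its optional second argument (an alternative sysfs root, useful for a dry run against a scratch directory) are assumptions made for illustration. On a real system it must be run as root.

```shell
# Remove a PCI device and trigger a bus rescan, as in the commands above.
# $1: address as printed by lspci (e.g. 01:00.0), with or without a domain
# $2: sysfs root, defaults to /sys (hypothetical parameter for dry runs)
reset_pci_device() {
    addr=$1
    sysfs=${2:-/sys}
    case $addr in
        ????:*) ;;                   # already has a domain, e.g. 0000:01:00.0
        *) addr="0000:$addr" ;;      # assume PCI domain 0000
    esac
    dev="$sysfs/bus/pci/devices/$addr"
    if [ ! -e "$dev/remove" ]; then
        echo "no such PCI device: $addr" >&2
        return 1
    fi
    echo 1 > "$dev/remove"
    echo 1 > "$sysfs/bus/pci/rescan"
}
```

With the example above, this would be called as reset_pci_device 01:00.0.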

Could not load GPU driver

If the console output is:

[ERROR]Cannot access secondary GPU - error: Could not load GPU driver

and if you try to load the nvidia module:

# modprobe nvidia

modprobe: ERROR: could not insert 'nvidia': Exec format error

This could be because the nvidia driver is out of sync with the Linux kernel, for example if you installed the latest nvidia driver and have not updated the kernel in a while. A full system update, followed by a reboot into the updated kernel, might resolve the issue. If the problem persists, try building the nvidia module against your current kernel, for example with nvidia-dkms or by compiling nvidia from the ABS.
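One way to check for such a mismatch is to compare the kernel release the module was built for with the running kernel. The sketch below assumes the first word of the module's vermagic string is that release; vermagic_matches is a hypothetical helper name, and a match does not rule out other causes of the error.

```shell
# Succeed if the kernel release a module was built for (the first word of
# its vermagic string, $1) equals the given kernel release ($2).
# vermagic_matches is a hypothetical helper name.
vermagic_matches() {
    built_for=${1%% *}     # keep only the text before the first space
    [ "$built_for" = "$2" ]
}
```

A possible use is vermagic_matches "$(modinfo -F vermagic nvidia)" "$(uname -r)" || echo "nvidia module was built for a different kernel".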

NOUVEAU(0): [drm] failed to set drm interface version

Consider switching to the official nvidia driver. As commented here, the nouveau driver has issues with some cards and Bumblebee.

[ERROR]Cannot access secondary GPU - error: X did not start properly

Set the "AutoAddDevices" option to "true" in /etc/bumblebee/xorg.conf.nvidia (see here):

Section "ServerLayout"
    Identifier     "Layout0"
    Option         "AutoAddDevices" "true"
    Option         "AutoAddGPU" "false"
EndSection

/dev/dri/card0: failed to set DRM interface version 1.4: Permission denied

This can be worked around by appending the following lines to /etc/bumblebee/xorg.conf.nvidia (see here):

Section "Screen"
    Identifier     "Default Screen"
    Device         "DiscreteNvidia"
EndSection

ERROR: ld.so: object 'libdlfaker.so' from LD_PRELOAD cannot be preloaded: ignored

You are probably trying to start a 32-bit application with Bumblebee on a 64-bit system. See the "For 32-bit..." section in #Installation. If the problem persists, or if it is a 64-bit application, try using the primus bridge.

Fatal IO error 11 (Resource temporarily unavailable) on X server

Your program forks into the background and Bumblebee does not know anything about it. Change KeepUnusedXServer in /etc/bumblebee/bumblebee.conf from false to true.

Video tearing

Video tearing is a somewhat common problem on Bumblebee. To fix it, you need to enable vsync. It should be enabled by default on the Intel card, but verify that from Xorg logs. To check whether or not it is enabled for NVIDIA, make sure nvidia-settings is installed and run:

$ optirun nvidia-settings -c :8

X Server XVideo Settings -> Sync to VBlank and OpenGL Settings -> Sync to VBlank should both be enabled. The Intel card generally shows less tearing, so use it for video playback, preferably with VA-API video decoding (e.g. mplayer-vaapi with the -vsync parameter).

Refer to Intel graphics#Tearing on how to fix tearing on the Intel card.

If it is still not fixed, try to disable compositing from your desktop environment. Try also disabling triple buffering.

Bumblebee cannot connect to socket

You might get something like:

$ optirun glxspheres64

$ optirun glxspheres32

[ 1648.179533] [ERROR]You have no permission to communicate with the Bumblebee daemon. Try adding yourself to the 'bumblebee' group
[ 1648.179628] [ERROR]Could not connect to bumblebee daemon - is it running?

If you are already in the bumblebee group (groups | grep bumblebee), you may try removing the socket /var/run/bumblebeed.socket.
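Note that groups | grep bumblebee also matches any group name that merely contains "bumblebee". A stricter membership check can be sketched as follows; the function name in_group is hypothetical, and the optional second argument (a space-separated group list to check instead of the current user's groups) exists only to make the check easy to try out.

```shell
# Succeed if the group name $1 appears as a whole word in the group list.
# $2: space-separated group list; defaults to the current user's groups.
# in_group is a hypothetical helper name.
in_group() {
    group=$1
    list=${2-$(id -nG)}
    for g in $list; do
        [ "$g" = "$group" ] && return 0
    done
    return 1
}
```

For example, in_group bumblebee || echo "not in the bumblebee group" reports a missing membership for the current session.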

Another reason for this error could be that you have not actually turned on both GPUs in your BIOS, and as a result, the Bumblebee daemon is in fact not running. Check the BIOS settings carefully and be sure Intel graphics (integrated graphics, possibly abbreviated in the BIOS as something like igfx) has been enabled or set to auto, and that it is the primary GPU. Your display should be connected to the onboard integrated graphics, not the discrete graphics card.

If you mistakenly had the display connected to the discrete graphics card and Intel graphics was disabled, you probably installed Bumblebee after first trying to run NVIDIA alone. In this case, be sure to remove the /etc/X11/xorg.conf or /etc/X11/xorg.conf.d/20-nvidia.conf configuration files. If Xorg is instructed to use NVIDIA in a configuration file, X will fail.

Running X.org from console after login (rootless X.org)

Using Primus causes a segmentation fault

In some instances, using primusrun instead of optirun will result in a segfault. This is caused by an issue in code auto-detecting faster upload method, see FS#58933.

The workaround is skipping auto-detection by manually setting PRIMUS_UPLOAD environment variable to either 1 or 2, depending on which one is faster on your setup.

$ PRIMUS_UPLOAD=1 primusrun ...

Primusrun mouse delay (disable VSYNC)

For primusrun, VSYNC is enabled by default, which can cause mouse input lag or even slightly decrease performance. Test primusrun with VSYNC disabled:

$ vblank_mode=0 primusrun glxgears

If you are satisfied with the above setting, create an alias (e.g. alias primusrun="vblank_mode=0 primusrun").

| VSYNC enabled | FPS | Score | Min FPS | Max FPS |
|---|---|---|---|---|
| FALSE | 31.5 | 793 | 22.3 | 54.8 |
| TRUE | 31.4 | 792 | 18.7 | 54.2 |

Tested on an ASUS N550JV notebook with the unigine-heaven (AUR) benchmark.

Note: To disable vertical synchronization system-wide, see Intel graphics#Disable Vertical Synchronization (VSYNC).

Primus issues under compositing window managers

Since compositing hurts performance, invoking primus when a compositing WM is active is not recommended.[4] If you need to use primus with compositing and see flickering or bad performance, synchronizing primus’ display thread with the application’s rendering thread may help:

$ PRIMUS_SYNC=1 primusrun ...

This makes primus display the previously rendered frame.

Problems with bumblebee after resuming from standby

On some systems, the nvidia module may be loaded after resuming from standby. One possible solution is to install the acpi_call and acpi packages.

Optirun does not work, no debug output

Users are reporting that in some cases, even though Bumblebee was installed correctly, running

$ optirun glxgears -info

gives no output at all, and the glxgears window does not appear. Any program that needs 3D acceleration crashes:

$ optirun bash
$ glxgears
Segmentation fault (core dumped)

Apparently this is a bug in some versions of virtualgl. A workaround is to install primus and lib32-primus and use it instead:

$ primusrun glxspheres64
$ optirun -b primus glxspheres64

By default, primus locks the frame rate to the refresh rate of your monitor (usually 60 fps). If needed, it can be unlocked by setting the vblank_mode=0 environment variable:

$ vblank_mode=0 primusrun glxspheres64

Usually there is no need to display more frames than your monitor can handle, but you might want to for benchmarking or for faster reactions in games. For example, if a game needs 3 frames to react to a mouse movement, then with vblank_mode=0 the reaction is as quick as your system can handle, whereas without it the reaction always takes 1/20 of a second (3 frames at 60 fps).
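The 1/20 of a second figure follows from dividing the number of frames by the frame rate. As a small sketch of that arithmetic (the function name frames_to_ms is hypothetical, and the result is truncated to whole milliseconds by integer division):

```shell
# Time in milliseconds needed to display a given number of frames at a
# given frame rate. frames_to_ms is a hypothetical helper name.
# $1: number of frames, $2: frames per second
frames_to_ms() {
    frames=$1
    fps=$2
    echo $(( frames * 1000 / fps ))
}
```

frames_to_ms 3 60 prints 50, i.e. 50 ms or 1/20 of a second.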

You might want to edit /etc/bumblebee/bumblebee.conf to use primus as the default bridge. To check after an update whether the bug has been fixed, just use optirun -b virtualgl.

See this forum post for more information.

Broken power management with kernel 4.8


If you have a newer laptop (BIOS date 2015 or newer), then Linux 4.8 might break bbswitch (bbswitch issue 140) since bbswitch does not support the newer, recommended power management method. As a result, the GPU may fail to power on, fail to power off or worse.

As a workaround, add pcie_port_pm=off to your Kernel parameters.

Alternatively, if you are only interested in power saving (and perhaps use of external monitors), remove bbswitch and rely on Nouveau runtime power-management (which supports the new method).

Note: Some tools such as powertop --auto-tune automatically enable power management on PCI devices, which leads to the same problem [5]. Use the same workaround or do not use all-in-one power management tools.

Lockup issue (lspci hangs)

See NVIDIA Optimus#Lockup issue (lspci hangs) for an issue that affects new laptops with a GTX 965M (or alike).

Discrete card always on and acpi warnings

Add acpi_osi=Linux to your Kernel parameters. See [6] and [7] for more information.

Screen 0 deleted because of no matching config section

Modify the configuration as follows:

/etc/bumblebee/xorg.conf.nvidia

...
Section "ServerLayout"
    ...
    Screen 0 "nvidia"
    ...
EndSection
...
Section "Screen"
    Identifier "nvidia"
    Device "DiscreteNvidia"
EndSection
...

Erratic, unpredictable behaviour

If Bumblebee starts/works in a random manner, check that you have set your Network configuration#Local network hostname resolution (details here).

Discrete card always on and nvidia driver cannot be unloaded

Make sure nvidia-persistenced.service is disabled and not currently active. It is intended to keep the nvidia driver running at all times [8], which prevents the card being turned off.

Discrete card is silently activated when EGL is requested by some application

If the discrete card is activated by some program (e.g. mpv with its GPU backend), it might stay on. The cause may be libglvnd, which loads the nvidia drivers and thereby activates the card.

To disable this, set the environment variable __EGL_VENDOR_LIBRARY_FILENAMES (see documentation) so that only the mesa configuration file is loaded:

__EGL_VENDOR_LIBRARY_FILENAMES="/usr/share/glvnd/egl_vendor.d/50_mesa.json"

nvidia-utils (and its branches) installs the configuration file /usr/share/glvnd/egl_vendor.d/10_nvidia.json, which has priority and causes libglvnd to load the nvidia drivers and enable the card.

The other solution is to avoid installing the configuration file provided by nvidia-utils .
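If you prefer not to set the variable globally, it can be applied to individual programs only. The wrapper below is a sketch; the function name run_with_mesa_egl is hypothetical, and the path is the mesa configuration file named above.

```shell
# Run a single command with libglvnd restricted to the mesa EGL vendor
# file, so that the nvidia EGL library is not loaded for that command.
# run_with_mesa_egl is a hypothetical helper name.
run_with_mesa_egl() {
    env __EGL_VENDOR_LIBRARY_FILENAMES="/usr/share/glvnd/egl_vendor.d/50_mesa.json" "$@"
}
```

For example, run_with_mesa_egl mpv video.mkv would start mpv with only the mesa EGL configuration visible (video.mkv is a placeholder file name).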

Framerate drops to 1 FPS after a fixed period of time

With the nvidia 440.36 driver, the DPMS setting is enabled by default, resulting in a timeout after a fixed period of time (e.g. 10 minutes) that causes the frame rate to throttle down to 1 FPS. To work around this, add the following line to the "Device" section in /etc/bumblebee/xorg.conf.nvidia:

Option "HardDPMS" "false"

Application cannot record screen

When using Bumblebee, applications cannot access the screen to identify and record it. This happens, for example, when using obs-studio with NVENC activated. To solve this, disable the bridging mode by running the application with optirun -b none.

See also

- Bumblebee project repository

- Bumblebee project wiki

- Bumblebee project bbswitch repository